JPMorgan systematically addressed the ten core issues in the AI industry. China's AI sector is transitioning from price competition to a focus on model quality, with 'task completion rate' being far more decisive than 'token unit price' for customer retention in intelligent agent scenarios. The key to profitability lies in whether gross profit growth can consistently outpace R&D expenditure growth. Zhipu and MiniMax are both projected to turn profitable starting in 2029, and the overall market will not evolve into a winner-takes-all landscape.

China's foundation model industry is at a critical juncture, moving from an "expectation-driven" phase to a "demand-driven" one. In a recent research report, JPMorgan systematically addressed the ten core questions investors have about this industry, stating that model quality has become the primary variable determining market dynamics and that industry differentiation will accelerate.

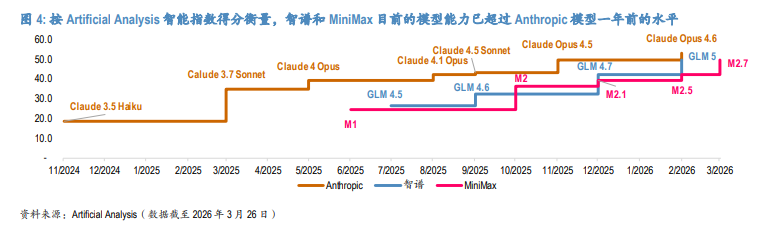

According to the JPMorgan report, released on March 27, China’s AI market is at a significant inflection point, with demand growth in coding and agent scenarios accelerating. Domestic models have reached levels close to or even surpassing those of leading U.S. models from a year ago, and localized pricing is a better fit for local economics; both factors are improving deployment returns.

The year 2026 will be crucial for whether China’s enterprise AI demand can replicate the U.S. growth curve seen in 2025. Taking Anthropic as a reference, its annual recurring revenue (ARR) surged from $1 billion in December 2024 to $19 billion in March 2026, increasing approximately 19-fold within 15 months.

The Chinese market is well-positioned to follow a similar trajectory, particularly in coding. Internet giants such as Tencent (00700.HK), Alibaba (BABA.US), and ByteDance have integrated relevant tools into their existing ecosystems, moving demand from standalone demonstrations to full-scale deployment. The bank has maintained its "Overweight" rating on Zhipu (KNOWLEDGE ATLAS, 02513.HK) and MiniMax (MINIMAX-W, 00100.HK), with target prices of HKD 800 and HKD 1,100, respectively.

Question One: Will AI demand grow linearly, or will it explode at an inflection point?

Demand is driven by inflection points rather than linear growth.

As long as the model quality is sufficient to unlock real-world application scenarios, usage will shift from linear growth to an “exponential curve”-type explosion. The most compelling evidence comes from the U.S. market: Anthropic’s annual recurring revenue (ARR) soared from $1 billion in December 2024 to $19 billion in March 2026, growing nearly 19 times in just 15 months.
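To make the "exponential rather than linear" point concrete, the short sketch below derives the compound monthly growth rate implied by the two ARR figures cited above. Only the start value, end value, and 15-month span come from the report; the assumption of a constant monthly rate is a simplification for illustration, not something the report states.

```python
# ARR figures cited in the report (USD billions).
start_arr = 1.0    # December 2024
end_arr = 19.0     # March 2026
months = 15

multiple = end_arr / start_arr

# Assumption: growth compounds at a constant monthly rate.
monthly_growth = multiple ** (1 / months) - 1

# A linear path between the same endpoints adds a fixed amount each month.
linear_step = (end_arr - start_arr) / months

print(f"Growth multiple: {multiple:.0f}x")
print(f"Implied compound monthly growth: {monthly_growth:.1%}")
print(f"Equivalent linear increment: ${linear_step:.1f}B per month")
```

Under this simplification the implied rate is a little over 20% per month, which is what separates an inflection-style curve from a steady linear climb between the same two points.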

China currently possesses the foundational conditions for a similar explosion: domestic model capabilities have approached or even surpassed those of leading U.S. models from a year ago, and localized pricing aligns better with China’s labor economics, significantly improving the return on AI implementation.

On the agent side, OpenClaw has become a key catalyst, advancing use cases from single-turn interactions to multi-step task execution, significantly increasing token consumption per task. Internet giants such as Tencent, Alibaba, and ByteDance have integrated OpenClaw-related tools into their existing ecosystems, marking a shift from “developer experimentation” to “full ecosystem deployment.”

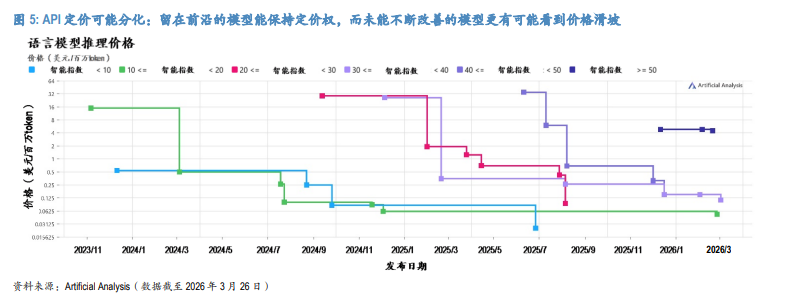

Question Two: Will API pricing rise, fall, or diverge?

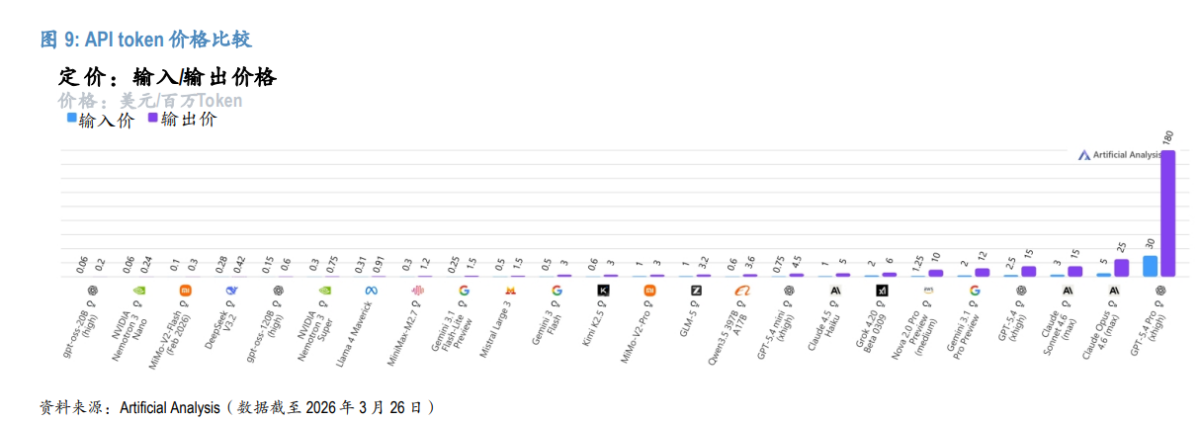

Pricing will not move in a single direction; divergence is the main theme.

On one hand, highly capable models establish pricing power. If a model can uniquely unlock high-value tasks (e.g., agent coding, long-duration workflows, enterprise-grade reliability), customers are willing to pay a premium because the return on investment can be quantified. On the other hand, as hardware and algorithmic efficiency continue to improve, the unit cost of inference will keep declining, exerting price pressure on models that fail to evolve.

The ultimate outcome will be a bifurcated pricing structure: models that consistently maintain cutting-edge capabilities can achieve both volume and price increases, while models that fail to iterate will face declining prices. Even if usage continues to grow, their profit margins will become uncertain.

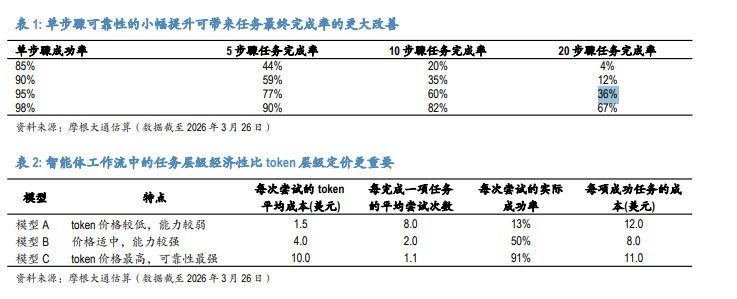

Question Three: If pricing is not the main battleground, where does competition focus?

The main battleground has shifted from token prices to model capabilities.

This represents a key change compared to last year—whereas the focus in the Chinese market in 2025 was an all-out price war, in today’s fastest-growing demand scenarios such as coding and agents, quality far outweighs per-unit cost.

In multi-step workflows, what customers are purchasing is not “cheap tokens” but “successful task completion.” The research report provides an intuitive mathematical example: if the success rate of a single step improves from 85% to 98%, the final completion rate of a 20-step task will surge from 4% to 67%. Under this logic, the model with the lowest per-token pricing may end up having the highest overall cost for completing each task.
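The arithmetic behind this example can be checked in a few lines. The sketch below assumes each step succeeds independently, so the task completion rate is the per-step success rate raised to the number of steps. The second half compares cost per completed task using hypothetical prices and token counts (not figures from the report) and assumes a failed task is retried from scratch.

```python
def completion_rate(step_success: float, steps: int) -> float:
    """Chance that every step succeeds, assuming steps fail independently."""
    return step_success ** steps


def cost_per_completed_task(price_per_mtok: float, tokens_per_attempt: int,
                            step_success: float, steps: int) -> float:
    """Expected spend per successful task if failed attempts restart from scratch."""
    attempt_cost = price_per_mtok * tokens_per_attempt / 1_000_000
    expected_attempts = 1 / completion_rate(step_success, steps)
    return attempt_cost * expected_attempts


# Figures from the report: 20 steps, 85% vs. 98% per-step success.
low = completion_rate(0.85, 20)
high = completion_rate(0.98, 20)
print(f"85% per step -> {low:.0%} task completion")
print(f"98% per step -> {high:.0%} task completion")

# Hypothetical prices (USD per million tokens) and token counts, for illustration only.
cheap = cost_per_completed_task(1.0, 200_000, 0.85, 20)
premium = cost_per_completed_task(5.0, 200_000, 0.98, 20)
print(f"Cheap model:   ${cheap:.2f} per completed task")
print(f"Premium model: ${premium:.2f} per completed task")
```

With these illustrative inputs, the model that is five times more expensive per token still comes out cheaper per completed task, because the cheaper model needs far more attempts to finish one.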

The report also points out that companies with strong, leading-edge models can easily extend into lower-end markets, whereas companies relying solely on low prices will struggle to enter the high-end market.

Question Four: Why is the foundational large model industry still a “life-and-death struggle”?

Small technological gaps, endless iteration cycles, and converging monetization models—these three factors make the industry exceptionally brutal.

The capability gap between China's major large model companies is often smaller than investors anticipate, resulting in a highly unstable market. In this industry, 'standing still' does not represent a neutral outcome but rather signifies a loss of position—companies must continuously invest and iterate to avoid falling behind.

The convergence of business models has intensified the pressure of elimination. Both revenue growth and profit margins primarily depend on product strength, while switching costs remain low. This means that companies losing technological momentum will rapidly lose their commercial and financial defenses, leading to a gradual reduction in the number of truly reliable companies within the industry.

Question Five: What determines profitability?

The core issue is whether gross profit growth can consistently outpace the growth rate of R&D expenditures.

The fundamental economic model for token businesses is straightforward: Revenue = Token Usage × Price. The primary cost involves inference computation, with the largest operational expenditure being training-related R&D. As model efficiency and inference chip efficiency continue to improve, the gross margin for leading models should gradually increase.
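The relationship between the revenue formula and the profitability test can be sketched as follows. This is a minimal illustration, not the report's model: the function names, the constant growth rates, and every input value are hypothetical, and operating costs other than training-related R&D are ignored.

```python
def gross_profit(usage_mtok: float, price_per_mtok: float,
                 inference_cost_per_mtok: float) -> float:
    """Revenue (usage x price) minus inference cost, for usage in millions of tokens."""
    revenue = usage_mtok * price_per_mtok
    return revenue - usage_mtok * inference_cost_per_mtok


def first_profitable_year(gp: float, rnd: float, gp_growth: float,
                          rnd_growth: float, max_years: int = 20):
    """First year index (0 = now) in which gross profit covers R&D, else None."""
    for year in range(max_years + 1):
        if gp >= rnd:
            return year
        gp *= 1 + gp_growth
        rnd *= 1 + rnd_growth
    return None


# Hypothetical inputs: 100M tokens-millions of usage, $2 price, $1 inference cost.
gp_now = gross_profit(usage_mtok=100.0, price_per_mtok=2.0,
                      inference_cost_per_mtok=1.0)

# Gross profit doubling each year against R&D growing 30% a year closes the gap.
print(first_profitable_year(gp_now, rnd=400.0, gp_growth=1.0, rnd_growth=0.3))

# If both grow at the same rate, the gap never closes within the horizon.
print(first_profitable_year(gp_now, rnd=400.0, gp_growth=0.3, rnd_growth=0.3))
```

The point of the sketch is the comparison of growth rates: the starting gap matters less than whether gross profit compounds faster than R&D spending.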

However, the outlook for operating profits is more complex. Anthropic serves as a cautionary example: even with annualized revenue reaching $14 billion by February 2026, the company announced a new $30 billion financing round during the same period and emphasized continued frontier development. High revenue does not mean that training intensity has normalized.

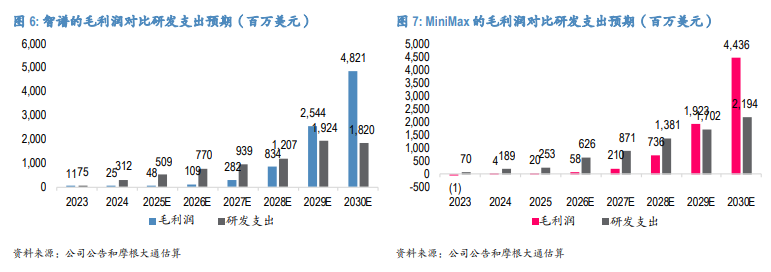

In the baseline scenario, both Zhipu and MiniMax are expected to turn profitable starting in 2029. The research report stresses that the exact year of profitability matters less than the metrics to track along the way: a sustained growth trend in usage and continuous improvement in unit economics.

Question Six: How should investors track model strength?

It is necessary to consider three dimensions: token price, usage volume, and third-party evaluations. A single metric is insufficient to provide a complete picture.

Token price: This is the most critical indicator, as it represents the company’s real-time positioning of its product in the market. The price difference relative to the best models is becoming a strong proxy for actual competitiveness.

Token Usage: Actual consumption reflects the genuine choices of users and developers. Third-party API aggregators like OpenRouter can serve as references, with particular attention to the growth of agent-based workloads, as these consume significantly more tokens per task than simpler workflows.

Third-party evaluations: Artificial Analysis provides structured assessments, while LMArena reflects real user blind preferences; the two are complementary, offering a more comprehensive external perspective.

Question Seven: With internet giants aggressively entering the B2B space, what is the future for independent model companies?

As competition boundaries converge, the ultimate focus remains on the comparison of model capabilities.

Alibaba has clearly identified cloud and AI as strategic priorities, deeply integrating model development with enterprise workflows. Tencent’s intelligent agent products now cover personal, developer, and enterprise scenarios comprehensively. OpenAI has also shifted its commercial focus to enterprise solutions and coding deployment. Leading companies share a common direction: AI is evolving from a "consumer-end feature" into a "tool that directly generates corporate revenue."

In this context, independent model companies can no longer rely solely on the "cloud neutrality" label to establish a competitive moat, and internet giants cannot fully compensate for shortcomings in model capabilities by leveraging ecosystem traffic advantages alone. When enterprises deploy AI, the core purchase criterion remains model quality—stronger coding reasoning abilities and more reliable workflow completion rates.

Question Eight: What factors determine a company’s survival?

Talent first, computing power second, and organization third—all three are indispensable.

Top research talent: This remains a research-driven industry. The technical judgment of senior leadership itself serves as a competitive factor, and whether management can make correct research direction decisions directly influences the company's technological trajectory.

Computing power and capital: Cutting-edge training costs are high, and the economic feasibility of inference depends on the quality of infrastructure. Weakness in acquiring computing power represents a structural disadvantage—not only affecting model training efficiency but also undermining the ability to respond to demand at a reasonable cost.

Organizational execution: In a rapidly iterating market, the ability to convert research findings into products, products into usage, and usage into monetization is almost as important as the model itself.

Question Nine: If everyone is improving, will models eventually converge?

Overall strength will converge but not become identical; the market will not form a winner-takes-all pattern.

Different companies have variations in architecture choices, training data, product focus, and technological approaches, which will continue to generate distinct competitive advantages. The research report suggests that in a still rapidly expanding market, multiple companies can grow simultaneously, even with some overlap in capabilities—currently, the significance of overall market expansion far outweighs premature concerns about commoditization.

In the long term, a more realistic market outcome is not 'one dominant player, all others out,' but rather the emergence of several truly capable companies, each excelling in specific areas, competing in a market large enough to support multiple winners. As AI extends from productivity tools to consumer-facing scenarios, differences in personal tastes, styles, and preferences will further reinforce this diversified landscape.

Question Ten: How should we understand open-source/closed-source models, model iteration, and global expansion risks holistically?

Iteration is mandatory, open-source/closed-source is a strategic choice, and the core risks of global expansion lie in computational power and compliance.

Regarding model iteration, the expected pace is approximately one flagship model generation per year (e.g., from GLM 4.7 to GLM 5, or MiniMax M2 series to M3 series), accompanied by minor upgrades driven by reinforcement learning. Halting iteration means losing competitive standing.

On the open-source/closed-source front, the research report argues that the answer is not binary. Closed-source models offer stronger commercial defensibility, reducing the risk of disintermediation; open-source fosters ecosystem development, boosts adoption rates, and accelerates technical feedback. Therefore, most Chinese model companies will ultimately adopt a hybrid strategy: keeping their latest and strongest models closed-source while open-sourcing certain other versions.

For global expansion, the biggest risk remains access to computational resources. Both training and inference heavily rely on high-performance chips, and tightened export controls will simultaneously slow down model advancements and weaken cost competitiveness. The second major risk involves data and security compliance: if model deployment, user services, and data storage can achieve overseas localization, cross-border data transmission issues become relatively manageable; however, local privacy laws and recognition of data access rights for China-related entities remain sources of uncertainty.

Editor/Marvin