The 'Father of HBM' predicts that AI architecture will shift from GPU-centric to memory-centric: memory demand in the age of agentic AI could increase a millionfold, pushing existing HBM technology to its limits. Engineering samples of next-generation HBF technology, based on stacked NAND, are expected around 2027, with adoption by Google, NVIDIA, or AMD possible as early as 2028. SK hynix has already partnered with Sandisk to take an early lead in standard-setting, while Samsung is pursuing its own NAND research along similar lines.

As AI transitions from "generation" to "autonomous action," the bottleneck in computing power may shift from GPUs to memory.

According to local media reports, including those from the South Korean outlet Asia Economy, Joungho Kim, a professor at KAIST and widely regarded as the "father of HBM," recently made a prediction: the current GPU-centric AI architecture dominated by NVIDIA will eventually be replaced by a new architecture centered on memory.

Behind this assessment lies a fundamental shift in the form of AI applications. As generative AI evolves into agentic AI, systems will need to simultaneously process massive volumes of documents, videos, and multimodal data—Kim refers to this trend as the rise of "context engineering." He pointed out that to ensure speed and accuracy, memory bandwidth and capacity must increase by up to 1,000 times.

The demand-side figures are even more striking: according to an earlier report by Money Today Broadcasting citing Kim, if input sizes grow by 100 to 1,000 times, memory requirements could surge exponentially, with total capacity potentially rising as much as a millionfold.

HBM will reach its ceiling, paving the way for HBF.

Kim explicitly stated that existing HBM technology—enabling ultra-high-speed transmission through vertically stacked DRAM and currently dominating the AI accelerator memory market—will become unsustainable in the era of agentic AI.

His proposed next-generation solution is HBF (High Bandwidth Flash): replacing DRAM with stacked NAND to build a "giant bookshelf-like" long-term memory system whose capacity goes far beyond current limits.

By analogy, HBM resembles a sticky note on a desk—fast but limited in capacity; HBF, on the other hand, is akin to an entire wall of books, capable of storing information on a completely different scale.

At the architectural level, according to a February report by Korea Economic Daily, SK hynix has proposed the "H3" architecture in a paper published by IEEE. The design places HBM and HBF side by side next to the GPU, unlike the current layout, in which only HBM sits adjacent to the processor. This implies that the GPU will move from the lead to a supporting role, with computational units embedded within a memory-centric system.

The timeline is becoming increasingly clear.

According to Kim's forecast, engineering samples of HBF are expected to emerge around 2027, with Google, NVIDIA, or AMD potentially adopting the technology as early as 2028.

This timeline closely resembles the path HBM took from the laboratory to large-scale commercial use, suggesting that the window for industry adoption is now opening.

SK hynix and Samsung are set for another head-to-head confrontation.

Kim also pointed out that the competitive landscape in the HBF field will follow the same pattern as in the HBM era, with SK hynix and Samsung Electronics once again taking center stage.

SK hynix formed an HBF standardization alliance with Sandisk this February, aiming to seize leadership of the ecosystem. Meanwhile, Samsung is advancing its next-generation HBM products, such as HBM4E, while also investing in NAND architecture research aligned with the HBF concept, according to Aju News.

The two giants have adopted different strategic paths, but both are targeting the same market. Whoever completes the closed loop from standard-setting to mass production and delivery first will, to a large extent, shape the landscape of the next phase of the AI memory market.

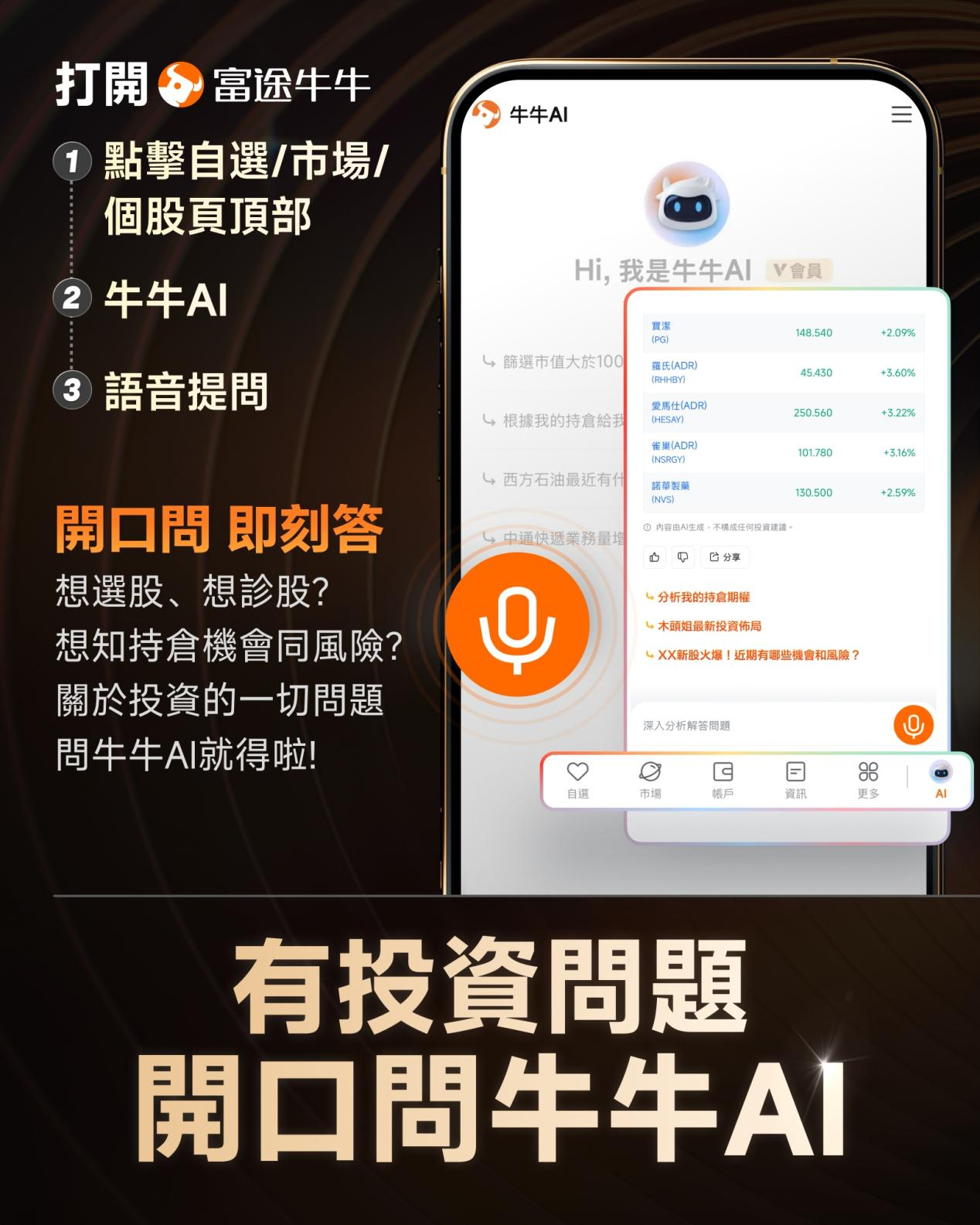

Editor/joryn