Microsoft and Google simultaneously launched new-generation AI models today. Microsoft released the MAI series of models, which cover speech transcription, speech generation, and text-to-image creation, and will accelerate their integration into products such as Copilot. Google, for its part, introduced Gemma 4, an open-source model family licensed under Apache 2.0 that runs locally and offers reasoning, code generation, and multimodal capabilities. Both companies are upgrading their AI capabilities and expanding application scenarios.

Microsoft (MSFT.US) and Alphabet-A (GOOGL.US) both released new-generation AI models on Thursday, further strengthening their positions in multimodal AI. Microsoft launched its self-developed MAI series of foundation models, covering speech transcription, speech generation, and image generation, and is accelerating their integration into its own product ecosystem. Google, for its part, released the open-source Gemma 4 models, which focus on local operation and multimodal capabilities, and switched their license to the more open Apache 2.0.

Microsoft: Three MAI Models Covering Speech and Image Capabilities

Microsoft (MSFT.US) launched three self-developed MAI models, which it describes as 'world-class':

First is MAI-Transcribe-1, an "advanced" speech-to-text model capable of understanding the 25 most widely used languages globally. Its batch transcription speed is 2.5 times faster than Microsoft's existing Azure Fast solution. The starting price for MAI-Transcribe-1 is $0.36 per hour.

Next is MAI-Voice-1, a new voice generation model that can generate 60 seconds of audio in just one second. Additionally, it supports creating custom voices in Microsoft Foundry using short audio samples. The starting price for MAI-Voice-1 is $22 per million characters.

Finally, there is MAI-Image-2, a faster text-to-image model that has already been rolled out in Copilot and will soon come to Bing and PowerPoint. MAI-Image-2 is priced at $5 per million tokens of text input and $33 per million tokens of image output.
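As a rough illustration of how the quoted prices translate into spending, the arithmetic can be sketched as below. The rates are the starting prices cited above; the function names are hypothetical, and the sketch assumes that the transcription price is charged per hour of audio and that billing is strictly linear, neither of which the article confirms.

```python
# Starting prices as quoted in the article (USD). Actual billing may differ.
TRANSCRIBE_USD_PER_AUDIO_HOUR = 0.36        # assumption: "per hour" means per hour of audio
VOICE_USD_PER_MILLION_CHARS = 22.0
IMAGE_USD_PER_MILLION_INPUT_TOKENS = 5.0    # text input
IMAGE_USD_PER_MILLION_OUTPUT_TOKENS = 33.0  # image output


def transcribe_cost(audio_hours: float) -> float:
    """Estimated MAI-Transcribe-1 cost for a given number of audio hours."""
    return audio_hours * TRANSCRIBE_USD_PER_AUDIO_HOUR


def voice_cost(characters: int) -> float:
    """Estimated MAI-Voice-1 cost for a given number of input characters."""
    return characters / 1_000_000 * VOICE_USD_PER_MILLION_CHARS


def image_cost(text_input_tokens: int, image_output_tokens: int) -> float:
    """Estimated MAI-Image-2 cost from text-input and image-output token counts."""
    input_cost = text_input_tokens / 1_000_000 * IMAGE_USD_PER_MILLION_INPUT_TOKENS
    output_cost = image_output_tokens / 1_000_000 * IMAGE_USD_PER_MILLION_OUTPUT_TOKENS
    return input_cost + output_cost


if __name__ == "__main__":
    # Hypothetical workload, chosen only to show the calculation.
    print(f"100 audio hours transcribed: ${transcribe_cost(100):.2f}")
    print(f"500,000 characters spoken:   ${voice_cost(500_000):.2f}")
    print(f"1M input + 1M output tokens: ${image_cost(1_000_000, 1_000_000):.2f}")
```

The workload figures in the example are invented; only the unit prices come from the announcement.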

All three models are now available on Microsoft Foundry, and the speech transcription and voice generation models are also available on MAI Playground. The models were developed by Microsoft's MAI Super Intelligence Team, which was established and publicly announced in November 2025 and is led by Mustafa Suleyman, CEO of Microsoft AI.

Microsoft stated:

"We are rapidly deploying these top-tier models to support our consumer and commercial products. Soon, you will see more models integrated into Foundry as well as various Microsoft products and experiences."

Media analysis suggests that, although Microsoft maintains a close partnership with OpenAI, this release shows the company is actively building its own multimodal AI model lineup while competing with other AI research institutions.

However, Suleyman reiterated in a media interview that Microsoft will continue its partnership with OpenAI. He also told the press that the recent renegotiation of their cooperation has enabled Microsoft to truly advance its superintelligence research.

Microsoft has invested over $13 billion in OpenAI and integrated its models into a variety of its own products through a multi-year collaboration. In the semiconductor sector, Microsoft employs a similar strategy: developing chips in-house while also purchasing products from external suppliers.

Google: Gemma 4 Open-Source Model Emphasizes Local Execution and Multimodal Capabilities

Google's (Alphabet-A, GOOGL.US) Gemma 4 open-source models are released under the Apache 2.0 license, replacing the custom Gemma license used previously. Google stated that the models offer advanced reasoning, agent-based workflows, code generation, as well as vision and audio generation capabilities. They come in four versions optimized for local operation and can even run on 'billions of Android devices'.

Google stated:

"Gemma 4 is built on the same world-class research and technology as Gemini 3 and represents the most powerful series of models currently available for execution on local hardware. They complement our Gemini models, providing developers with the industry’s strongest combination of open-source and proprietary tools."

"This open-source license provides developers with complete flexibility and a foundation of digital sovereignty, allowing full control over data, infrastructure, and models. You can freely build and securely deploy in any environment, whether on-premises or in the cloud."

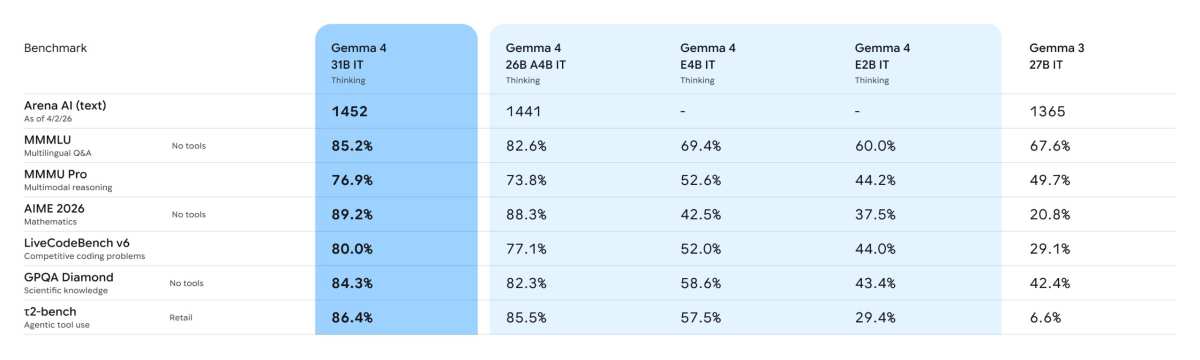

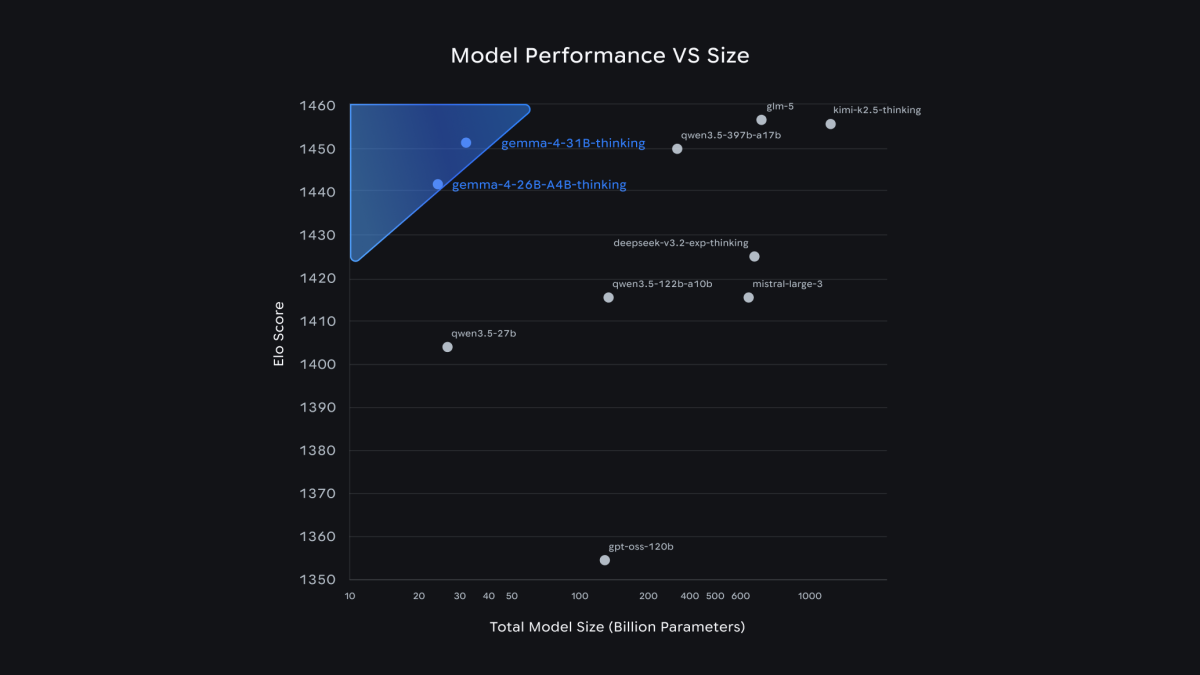

The four versions differ mainly in parameter count. For edge devices (including smartphones), the company introduced 'Effective' models with 2 billion and 4 billion parameters, focused on multimodal capabilities and low-latency processing for mobile and IoT devices. For more powerful hardware, it offers a 26-billion-parameter 'Mixture of Experts' model and a 31-billion-parameter 'Dense' model, designed to run on consumer-grade GPUs and capable of powering IDEs, programming assistants, and agent-based workflows. These models also support fully offline operation.
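To give a sense of what these parameter counts mean for local hardware, the sketch below estimates the memory needed just to hold the weights, using the generic rule of parameters multiplied by bytes per parameter. The precision options are illustrative assumptions and not formats Google is reported to ship. The estimate also ignores activations, caches, and runtime overhead, and the 'Effective' models may store more parameters than their effective count.

```python
# Parameter counts (in billions) as reported in the article.
GEMMA4_PARAMS_BILLIONS = {
    "Effective 2B": 2,
    "Effective 4B": 4,
    "26B Mixture of Experts": 26,
    "31B Dense": 31,
}

# Assumed storage precisions (bytes per parameter); illustrative only.
BYTES_PER_PARAM = {
    "16-bit": 2.0,
    "8-bit": 1.0,
    "4-bit": 0.5,
}


def weight_memory_gb(params_billions: float, bytes_per_param: float) -> float:
    """Weights-only memory estimate in decimal gigabytes (1 GB = 1e9 bytes)."""
    # (params_billions * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billions * bytes_per_param


if __name__ == "__main__":
    for model, size in GEMMA4_PARAMS_BILLIONS.items():
        estimates = ", ".join(
            f"{precision}: {weight_memory_gb(size, nbytes):.1f} GB"
            for precision, nbytes in BYTES_PER_PARAM.items()
        )
        print(f"{model:<24} {estimates}")
```

These figures are lower bounds on memory use, not measured requirements.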

Google stated that Gemma 4 achieves an 'unprecedented level of intelligence per parameter unit'. To substantiate this claim, the company pointed out that the 31-billion and 26-billion parameter versions of Gemma 4 ranked third and sixth respectively on the Arena AI text leaderboard, surpassing models that are 20 times larger.

All of these models can process videos and images, making them highly suitable for tasks such as optical character recognition. The two smaller models also support audio input processing and speech understanding. Additionally, Google stated that the Gemma 4 series supports offline code generation, enabling users to program without an internet connection (e.g., for 'vibe coding'). These models also support over 140 languages.

Google's Gemma 4 open-source models can be downloaded from multiple platforms, including Hugging Face, Kaggle, and Ollama. Google emphasized:

"These models adhere to the same stringent security protocols in terms of infrastructure safety as our proprietary models."

Editor/Melody